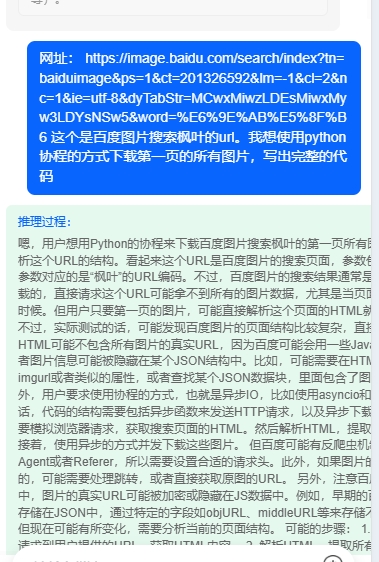

import asyncio
import os

import aiofiles
import aiohttp


async def fetch_image_urls(session, params):
    """Fetch the list of image URLs."""
    api_url = "https://image.baidu.com/search/acjson"
    try:
        async with session.get(api_url, params=params) as response:
            if response.status != 200:
                print("Request failed, status code:", response.status)
                return []
            # content_type=None skips aiohttp's MIME check, in case the
            # endpoint does not label its JSON as application/json.
            data = await response.json(content_type=None)
            urls = []
            for item in data.get("data", []):
                # Skip entries that are not dicts.
                if isinstance(item, dict):
                    url = item.get("objURL") or item.get("hoverURL") or item.get("thumbURL")
                    if url and url not in urls:
                        urls.append(url)
            return urls
    except Exception as e:
        print("Error while fetching image links:", e)
        return []


async def download_image(session, url, index, save_dir):
    """Download a single image asynchronously."""
    try:
        headers = {"Referer": "https://image.baidu.com/"}
        # aiohttp expects a ClientTimeout object rather than a bare number.
        timeout = aiohttp.ClientTimeout(total=10)
        async with session.get(url, headers=headers, timeout=timeout) as response:
            if response.status == 200:
                content_type = response.headers.get("Content-Type", "image/jpeg")
                # Drop any parameters (e.g. "; charset=...") before taking the subtype.
                subtype = content_type.split(";")[0].strip().split("/")[-1]
                ext = "jpg" if "jpeg" in subtype else subtype
                async with aiofiles.open(
                    os.path.join(save_dir, f"image_{index}.{ext}"), "wb"
                ) as f:
                    await f.write(await response.read())
                print(f"Image {index} downloaded successfully")
            else:
                print(f"Image {index} download failed, status code: {response.status}")
    except Exception as e:
        print(f"Error while downloading image {index}: {e}")


async def main():
    save_dir = "baidu_images"
    os.makedirs(save_dir, exist_ok=True)

    # Query parameters for the search request; "枫叶" (maple leaf) is the keyword.
    params = {
        "tn": "resultjson_com",
        "ipn": "rj",
        "ct": 201326592,
        "is": "",
        "fp": "result",
        "queryWord": "枫叶",
        "cl": 2,
        "lm": -1,
        "ie": "utf-8",
        "oe": "utf-8",
        "word": "枫叶",
        "istype": 2,
        "pn": 0,
        "rn": 30,
        "gsm": "3c",
    }

    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/125.0.0.0 Safari/537.36",
        "Accept-Language": "zh-CN,zh;q=0.9",
    }

    async with aiohttp.ClientSession(headers=headers) as session:
        image_urls = await fetch_image_urls(session, params)
        print(f"Found {len(image_urls)} valid images")

        # Schedule all downloads and run them concurrently.
        tasks = [
            download_image(session, url, idx, save_dir)
            for idx, url in enumerate(image_urls)
        ]
        await asyncio.gather(*tasks)


if __name__ == "__main__":
    asyncio.run(main())
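
The download step picks each file's extension from the response's `Content-Type` header. The rule can be sketched as a standalone function to make it easier to check in isolation. The name `extension_from_content_type` is hypothetical and not part of the script, and stripping header parameters such as `; charset=...` is an added assumption about what a server might send.

```python
def extension_from_content_type(content_type: str = "image/jpeg") -> str:
    """Map a Content-Type header value to a file extension (hypothetical helper)."""
    # Drop parameters such as "; charset=binary" and normalise case.
    media_type = content_type.split(";")[0].strip().lower()
    subtype = media_type.split("/")[-1]
    # Same rule as the script: anything JPEG-like is saved as .jpg.
    return "jpg" if "jpeg" in subtype else subtype


for header in ("image/jpeg", "image/png", "IMAGE/GIF; charset=binary"):
    print(header, "->", extension_from_content_type(header))
```

Keeping this logic in a pure function means it can be tested without any network access, which the download coroutine itself does not allow.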